What are Network Performance Metrics?

Learn about the most common metrics used for monitoring network performance

Network performance monitoring is the process used to track, evaluate, and diagnose the performance of a network. With the variety of devices, technologies, and network environments continuing to expand, the definition of optimal performance can vary significantly.

Network performance metrics are the measurable outputs that indicate how the infrastructure and services are operating as a part of short-term and long-term network performance evaluations. Real-time analysis of these metrics allows teams to identify potential problems on the network and prioritize IT resources and responses according to impact. Over time, network performance metrics support the understanding of end-user demands and help to build more adaptive networks that can meet present and future business needs.

The sheer size and complexity of modern networks can lead to monitoring challenges for network teams. Recent trends in network architecture have made pinpointing the source of internal and external issues a more daunting task.

Cloud Adoption

Cloud adoption has accelerated at a pace that has surprised even industry insiders. With over 90% of companies worldwide utilizing cloud services, visibility has become increasingly challenging. Proxy-in-the-cloud solutions put the responsibility on network performance analysts to decipher millions of conversations between the cloud and the original host. The type of cloud service, be it SaaS, IaaS, or PaaS, dictates the best network performance evaluation methods and metric choices to assess cloud applications accurately.

The IoT and Device Overload

The Internet of Things (IoT) produces nearly 80 zettabytes (ZB) of data per year across over 18 billion (and counting) connected devices. New IoT devices for medical, manufacturing, and transportation applications carry high expectations for network performance along with unpredictable traffic patterns and dispersed deployments that can make network performance evaluation difficult. The infrastructure and edge computing locations connecting these disparate devices are being taxed beyond anything previously encountered. The variety of IoT formats and protocols also introduces complexity for network traffic baselining and prioritization methods, making advanced network performance metrics and tools essential.

Siloed NetOps and SecOps

Network Operations (NetOps) and Security Operations (SecOps) teams share the common objective of keeping network traffic flowing efficiently and securely. The siloing of these two important functions can lead to challenges in network performance monitoring and metric selection that impact mean time to identify (MTTI). With network speeds, cloud adoption, and encrypted data volumes all on the rise, visibility for both Ops categories has been hindered. These challenges can be mitigated through network performance metrics and tools that provide a unified view of IT service health and foster a collaborative approach to issue response and remediation.

Skill and Resource Gaps

With IT teams and network engineers stretched to their limits, the addition of new data sources and metrics unrelated to the user experience can be counterproductive. Information overload and a lack of prioritization in network performance monitoring metrics also shift more of the troubleshooting and analysis burden to Tier 3 engineers. This takes valuable time and resources away from strategic initiatives and technology deployments. The addition of Tier 1 and 2 resources is of little value without automated workflows and capabilities like connection dynamics and application dependency mapping to isolate the problem domain and root cause quickly and reliably.

The pace of network architecture transformation, coupled with an influx of new network performance monitoring tools, can lead to confusion and indecision. For any network monitoring program, logical best practices should be implemented before network performance metrics are selected and finalized.

Baselining the Network

A network performance baseline is the set of metrics used to define normal working conditions on the network infrastructure. This baseline information is essential for setting standards against which future performance data will be compared and performance degradations will be defined. Careful analysis of traffic flow and utilization patterns is among the elements of reliable baselining.
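As a minimal sketch of how a baseline can be turned into a comparison standard, the example below summarizes historical samples as a mean and standard deviation, then flags new readings that fall too far outside that range. The function names, the sample values, and the threshold of three standard deviations are all illustrative assumptions, not a recommendation from any specific monitoring tool.

```python
from statistics import mean, stdev


def build_baseline(samples):
    """Summarize historical samples (for example, link utilization in percent)."""
    if len(samples) < 2:
        raise ValueError("at least two samples are needed to build a baseline")
    return {"mean": mean(samples), "stdev": stdev(samples)}


def is_degraded(value, baseline, k=3.0):
    """Flag a reading that is more than k standard deviations from the baseline mean.

    k=3.0 is an arbitrary illustrative threshold, not a standard.
    """
    return abs(value - baseline["mean"]) > k * baseline["stdev"]


# Hypothetical utilization history, in percent.
history = [40.0, 42.0, 38.0, 41.0, 39.0]
baseline = build_baseline(history)
```

With this history, a reading of 41.0 stays inside the normal band, while a reading of 75.0 would be flagged. Real traffic usually follows daily and weekly cycles, so a production baseline would likely be kept per time window rather than as one global average.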

Reviewing Network Infrastructure

As architecture becomes more complex, understanding the underlying composition of the network in detail is another essential best practice. Traditional infrastructure, consisting of hubs, switches, routers, and workstations, should be comprehensively indexed. This level of detail should apply to all devices and applications running on the network, as well as wireless networks, wide area networks (WANs), local area networks (LANs), virtual LANs, and cloud applications. Anything that touches an application, either directly or indirectly, can impact the user experience.

Tool Selection

Performance monitoring tool selection is another area where planning and best practice implementation can lead to greater efficiency. Tools should be selected based on their compatibility with the network configuration and metrics of interest. Integrated dashboards that display historical information and real-time trends, including shifts in bandwidth utilization, expiring digital certificates, Unified Communications performance, and other key performance metrics in computer networks, help both SecOps and NetOps teams stay one step ahead of important issues and accelerate root cause analysis.

Data fidelity is a quality shared by the best network performance analysis tools. Fidelity encompasses the accuracy, timeliness, completeness, and reliability of the data being translated into actionable reporting and alerts at the GUI level. A network performance analyzer with superior precision and unabridged packet capture capabilities can drive down mean time to resolve (MTTR) while improving the end user experience.

Increased network size, speed, and diversity can make it difficult to determine which network performance metrics are most useful for assessing system performance, and what relative weights these metrics should be assigned. Carefully selected metrics can lead to improved network availability, optimized traffic flow, reduced operational costs, and improved quality and security on a continual basis.

Channel Utilization

One effective measure of network efficiency is channel utilization, which is the fraction of communication channel transmission capacity that contains data packets. Increased utilization rates can detrimentally affect link and application throughput and performance. By monitoring utilization continually, with granularity in the millisecond range to capture elusive spikes, congestion issues can be anticipated and network expansion can be planned proactively. Increased utilization in the short term can also indicate serious security or performance issues.
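The definition above can be sketched as a simple ratio: bits observed during a sampling interval divided by the bits the link could have carried in that time. The function names, the use of cumulative byte counters, and the sample values below are assumptions made for illustration.

```python
def channel_utilization(bytes_transferred, interval_s, capacity_bps):
    """Fraction of link capacity used during one sampling interval."""
    if interval_s <= 0 or capacity_bps <= 0:
        raise ValueError("interval and capacity must be positive")
    return (bytes_transferred * 8) / (capacity_bps * interval_s)


def utilization_series(byte_counters, interval_s, capacity_bps):
    """Turn cumulative byte counters, sampled at a fixed interval, into per-interval utilization."""
    return [
        channel_utilization(later - earlier, interval_s, capacity_bps)
        for earlier, later in zip(byte_counters, byte_counters[1:])
    ]


# Hypothetical counters sampled every 10 ms on a 1 Gbps link.
counters = [0, 125_000, 250_000, 1_500_000]
series = utilization_series(counters, 0.01, 1_000_000_000)
```

In this sample, the first two intervals sit at 10% utilization and the third saturates the link. Averaged over the full 30 ms, the same traffic works out to 40%, which illustrates why fine-grained sampling is needed to catch short spikes.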

TCP Retransmission

Transmission Control Protocol (TCP) retransmission rate is an indicator of packet loss and an effective network performance metric, since retransmissions beyond a small percentage will lead to degraded application performance. Packet loss can occur when buffers are inadequate, or when packets arrive to find a full buffer and are discarded. Packets are also subject to damage or loss due to software bugs and configuration errors. Relying on retransmission to work around these issues can amplify the problem rather than correct it, since every retransmitted packet adds more traffic to the network.
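One rough way to estimate the retransmission rate on a single host is to compare two snapshots of its TCP counters. The sketch below assumes text in the layout Linux uses for `/proc/net/snmp`, where a `Tcp:` header line is followed by a `Tcp:` values line that includes `OutSegs` and `RetransSegs`. The function names are hypothetical, and the two sample snapshots are abbreviated, made-up values rather than real output.

```python
def parse_tcp_counters(snmp_text):
    """Extract TCP counters from text laid out like Linux /proc/net/snmp."""
    tcp_lines = [line.split() for line in snmp_text.splitlines() if line.startswith("Tcp:")]
    if len(tcp_lines) < 2:
        raise ValueError("expected a 'Tcp:' header line and a 'Tcp:' values line")
    names, values = tcp_lines[0][1:], tcp_lines[1][1:]
    return dict(zip(names, (int(v) for v in values)))


def retransmission_rate(before, after):
    """Retransmitted segments divided by segments sent between two snapshots."""
    sent = after["OutSegs"] - before["OutSegs"]
    retransmitted = after["RetransSegs"] - before["RetransSegs"]
    return retransmitted / sent if sent > 0 else 0.0


# Abbreviated, hypothetical snapshots (the real file has many more columns).
SAMPLE_BEFORE = "Tcp: OutSegs RetransSegs\nTcp: 1000 10\n"
SAMPLE_AFTER = "Tcp: OutSegs RetransSegs\nTcp: 3000 50\n"

rate = retransmission_rate(
    parse_tcp_counters(SAMPLE_BEFORE), parse_tcp_counters(SAMPLE_AFTER)
)
```

Here 40 retransmissions against 2,000 sent segments gives a rate of 2%. Host counters like these cover all connections at once, so a packet-based analyzer would still be needed to attribute retransmissions to a specific application or path.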

Round Trip Time

The round-trip time (RTT) is a measure of the amount of time it takes for a server to respond to a client packet, typically measured in milliseconds. The RTT can range from just a few milliseconds, under optimal conditions, to several seconds when network problems have developed. Bandwidth limitations, security issues, excessive traffic, and hardware problems can all cause increased RTT, so baselining this metric and including it in your network performance analysis can help to identify threats and performance degradations in real time.
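Individual RTT samples tend to be noisy, so a baseline is usually built from a smoothed value. One established option is the estimator TCP itself uses for its retransmission timer, described in RFC 6298: an exponentially weighted average (SRTT) plus a running measure of variation (RTTVAR). The sketch below applies that estimator to a list of samples; the function name and the sample values are assumptions, and the use of this estimator for monitoring baselines is a suggestion rather than something the article prescribes.

```python
def smooth_rtt(samples_ms, alpha=1 / 8, beta=1 / 4):
    """Feed RTT samples through the RFC 6298 estimator and return (srtt, rttvar).

    alpha and beta default to the values recommended in RFC 6298.
    """
    if not samples_ms:
        raise ValueError("at least one RTT sample is required")
    # First measurement: SRTT = R, RTTVAR = R / 2.
    srtt = samples_ms[0]
    rttvar = samples_ms[0] / 2
    for r in samples_ms[1:]:
        # RTTVAR is updated first, using the previous SRTT.
        rttvar = (1 - beta) * rttvar + beta * abs(srtt - r)
        srtt = (1 - alpha) * srtt + alpha * r
    return srtt, rttvar


# Hypothetical samples in milliseconds: a steady 100 ms path with one slow reply.
srtt, rttvar = smooth_rtt([100.0, 180.0])
```

A single 180 ms reply moves the smoothed RTT only from 100 ms to 110 ms, so a brief outlier does not shift the baseline much, while a sustained increase would pull it upward over successive samples.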

Jitter

Jitter is the variation in delay (latency) for received packets that is experienced when some packets take longer to travel between the same two points in a network. The causes of jitter include network congestion, timing drift, and route changes. In real-time applications such as Unified Communications (UC), excessive jitter can lead to audio and/or video artifacts that degrade quality and user satisfaction. Since jitter is a commonly observed network phenomenon, tracking the variation in delay between successive packets is a valuable practice.
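A widely used way to quantify jitter for real-time media is the interarrival jitter estimate defined for RTP in RFC 3550. For each pair of consecutive packets, it compares the spacing at the receiver with the spacing at the sender and folds the absolute difference into a running average with a gain of 1/16. The sketch below follows that formula; the function name and the timestamp lists are assumptions for illustration, and both lists are expected to use the same time unit.

```python
def interarrival_jitter(send_times, recv_times):
    """Running interarrival jitter per RFC 3550, in the same unit as the inputs."""
    if len(send_times) != len(recv_times):
        raise ValueError("send and receive lists must have the same length")
    jitter = 0.0
    for i in range(1, len(send_times)):
        # Difference between receiver spacing and sender spacing for this packet pair.
        d = (recv_times[i] - recv_times[i - 1]) - (send_times[i] - send_times[i - 1])
        jitter += (abs(d) - jitter) / 16
    return jitter


# Hypothetical timestamps in milliseconds: packets sent every 20 ms,
# with the second packet delayed by an extra 16 ms in transit.
send = [0, 20, 40]
recv = [50, 86, 106]
jitter_ms = interarrival_jitter(send, recv)
```

Because only differences in spacing are used, the sender and receiver clocks do not need to be synchronized. A constant delay, however large, produces zero jitter under this definition.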

Latency

Latency is a network performance metric quantified in units of time and refers to any form of delay in communication that occurs over a network. This metric is tracked bi-directionally between the host and the server. Potential contributing factors to network latency include security processes, router errors, an excessive number of hops in hybrid networks, and software malfunctions. Beyond a perceptible threshold, latency can significantly impact the overall user experience.

End-User Experience Score

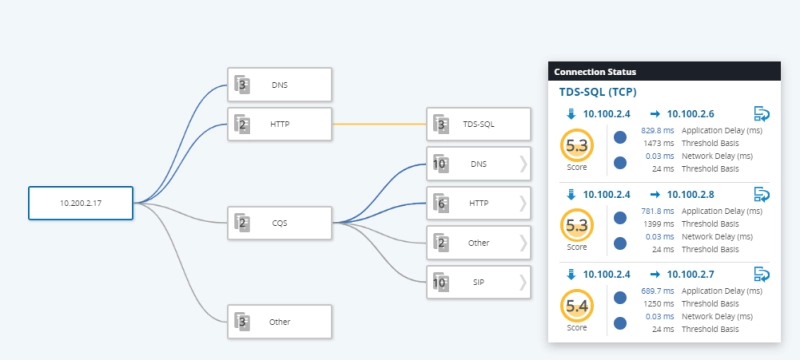

End-user experience (EUE) scoring is an invaluable network performance metric, as the numeric score provides a comprehensive rating from the user perspective. An intuitive 0-10 relative value, informative problem descriptions, performance visualizations, and domain isolation provide real-time information for every user transaction. Machine learning and advanced algorithms are used to interpret a wide variety of network conditions from the user perspective. The most pertinent standard network performance metrics are distilled into a single value that helps to accelerate issue diagnosis and resolution.

Modern medical technology provides real-time data to physicians for virtually every bodily system and function. Despite this impressive byproduct of modern medicine, “how do you feel” remains the most important question in the medical profession today, as always.

This same concept can be applied to performance measuring metrics in computer networks. With thousands of potential network performance metrics and KPIs available for monitoring, the amount of data can be overwhelming. Focusing on the user first is the best way to ensure value-added practices equate to satisfied customers by:

- Clearly visualizing service delivery and the quality of the end-user experience

- Quantifying the scope, severity, and impact of service-affecting issues

- Automating problem domain isolation to expedite troubleshooting

- Detecting performance degradations before they escalate into significant problems

Data of any kind becomes more relevant within the context of user experience and satisfaction, regardless of the numeric improvement observed. Real user-provided input on quality of experience (QoE), the analysis of simulated user transactions, and selectively tailored metrics that coincide with the outputs deemed most essential to EUE help to make network data sources more useful.

Traditional metrics for network performance like latency, packet loss, channel utilization, and jitter become increasingly valuable when performance thresholds correspond to real-world performance degradation. Observer Apex provides individually and logically grouped experience scores across multiple locations, protocols, and domains. Unique to VIAVI, it’s the first multi-dimensional score to quantify the impact of performance based on the experience of the end user. This eliminates guesswork and inefficient toggling between multiple network performance monitoring metrics in search of answers.

The Cloud, Gigabit Internet, and IoT have quickly moved from the drawing board to the real world on a massive scale. While these developments continue to enable new applications that enhance our lives in countless ways, the challenges in identifying effective network performance metrics have multiplied along with the technical complexity.

Focusing on the end user experience has always been the best way to prioritize the overwhelming number of available metrics, KPIs, and other data sources. The user is perhaps the most valuable real-time monitoring solution of all. Whether you are encountering a split-second performance blip or a slowly evolving trend of degradation, tying these observations to the user experience can improve baseline accuracy and response times while helping to predict and eliminate unwanted events in the future.
