Network Performance Monitoring

Proactive monitoring to improve network health and observability

Master network performance: a simple approach to tackling complex IT environments

Network teams are often overwhelmed as they try to stay ahead of an endless stream of complaints, logs, and reports. The key difficulty lies in locating the true source of network problems, especially at a time dominated by decentralized networks, the Internet of Things (IoT), SD-WAN, and layered configurations of private and hybrid cloud-based solutions.

With the rise of remote work models, employees working from a variety of locations often use cloud applications and frequently bypass the traditional data center path. This shift underscores the urgency of comprehensive infrastructure monitoring and deeper insight into the remote user experience.

The evolving landscape of network performance monitoring (NPM) and management is shaped by key trends driven by growing complexity.

These trends include the following:

- Adapting to network and location expansion: as the network grows, the hosting landscape now spans external geographic locations and multicloud environments alongside traditional deployments. This calls for a dynamic approach to monitoring that adapts to the differing needs of each hosting environment.

- Addressing visibility challenges with remote users and remote work: the rise of remote users and people working from home has created unique visibility challenges. These users often access network resources from outside the traditional enterprise environment and connect directly to cloud-based applications, frequently bypassing traditional data centers.

- Leveraging software as a service (SaaS): SaaS offers a practical cloud-based model for delivering application software, configuration services, and automated updates to consumers and businesses. This trend benefits users through ease of deployment and advanced security features, while also ensuring robust levels of network performance and security in a cloud-centric world.

The VIAVI approach: optimizing network performance with innovative NPM tools

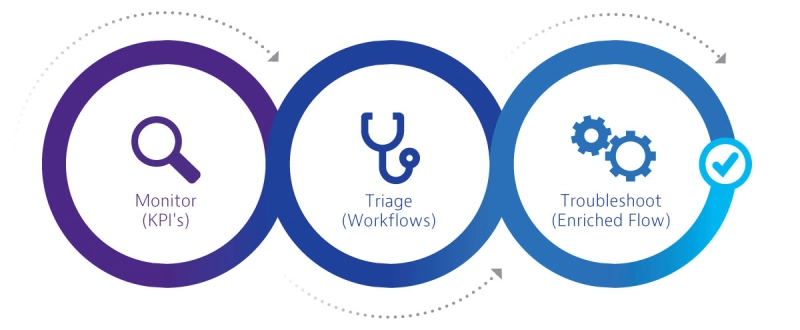

VIAVI network performance monitoring (NPM) tools are built for proactive troubleshooting, combining advanced end-user experience scoring, logical diagnostics, and intuitive workflows. Complete storage of all packets and flow data provides the detailed analysis needed for thorough forensic investigations. This enables IT teams to detect and address network performance problems and implement corrective measures before any significant disruption to the business occurs.

At a time when technological innovation, strategic cloud migration, and digital transformation are vital for businesses to remain competitive, IT teams are tasked with striking the right balance between business innovation and operational excellence. Our NPM solutions help achieve operational efficiency and allow IT teams to focus on digital innovation and proactive strategies.

This approach also covers managing day-to-day operations and mitigating the risks of both planned and unplanned events. It gives IT teams the tools they need to identify performance problems quickly, improving their ability to implement effective fixes rapidly. The solutions also provide enterprise-wide situational awareness and real-time observation of network health, offering the foundational visibility needed to mitigate risks related to network expansion and unforeseen challenges.

The VIAVI value: transforming network performance management with specialized solutions

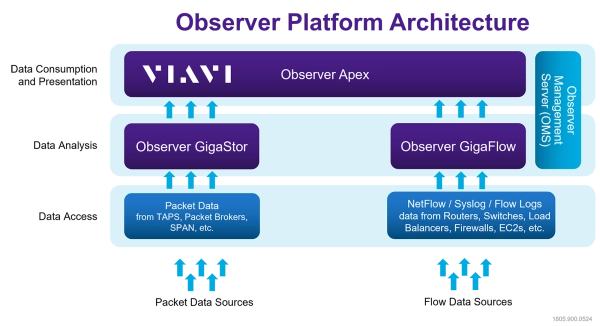

The VIAVI Observer platform, a leader in enterprise NPM solutions, represents a significant advance in network management. It features sophisticated predefined workflows and high-level dashboards well suited to complex network environments. With its automated, machine-learning-powered end-user experience (EUE) scoring, it provides real-time observability of network performance, allowing IT teams to relate network behavior directly to its actual effects on users.

These solutions can help identify and overcome key challenges in network visibility, technology integration, and asset management, all closely aligned with the company's strategic objectives. This combination of advanced features and business-centric design exemplifies VIAVI's commitment to improving network performance management across diverse organizational environments.

Observer provides detailed visibility into the health and performance of core IT tools and focuses on network monitoring from the end-user perspective. This approach allows network and operations teams to ensure seamless application delivery and robust IT services. The following three use cases, aimed at improving user experience and network functionality, show how this strategy aligns with high-level observability and performance objectives:

- Managing business service delivery and cloud adoption and migration: the Observer platform improves visibility into the end-user experience across critical applications, remote work environments, and modern cloud deployments. This allows IT teams to take a more proactive stance, supporting better cloud service delivery and stronger security in hybrid environments.

- Mitigating risks from changes and unexpected events: Observer's network performance monitoring capabilities are essential in helping reduce the risk, frequency, and impact of unexpected events and ongoing changes, including challenges such as project delays, regulatory compliance problems, and security-related corrective measures. A key part of this strategy is assessing and benchmarking the cloud before and after migration.

- Troubleshooting performance and cybersecurity problems: VIAVI Observer overcomes the blind spots caused by cloud-hosted applications and simplifies identifying the source, extent, and impact of security and performance problems. It does so by moving away from siloed responses and subjective user feedback in favor of end-to-end network visibility and predictive intelligence.
