Hyperscale

Testing Challenges, Resources, and Solutions

The Hyperscale Ecosystem and VIAVI

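VIAVI Solutions is an active participant in more than thirty standards bodies and open-source projects, including the Telecom Infra Project (TIP). When standards do not move fast enough, however, we anticipate where they are heading and develop equipment to test evolving infrastructure standards. We believe in open APIs, so hyperscale companies can continue to write their own automation code.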

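The complexity of hyperscale architecture makes testing essential during the network build, expansion, and monitoring phases. Decades of innovation, partnership, and collaboration with more than 4,000 global customers and standards bodies such as the FOA make VIAVI uniquely qualified to address the testing and assurance challenges that hyperscalers face. We assure the performance of optical hardware throughout the life cycle of the data center ecosystem, from the lab to turn-up to monitoring.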

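What is Hyperscale?

Hyperscale refers to the hardware and software architecture used to create highly adaptable computing systems with large numbers of networked servers. The definition provided by IDC includes a minimum of five thousand servers on a footprint of ten thousand square feet or larger.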
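The IDC thresholds quoted above can be written as a simple check. This is only an illustrative sketch: the function and constant names are hypothetical, and the two thresholds are the only values taken from the text.

```python
# Thresholds from the IDC definition quoted in the text above.
MIN_SERVERS = 5_000
MIN_FOOTPRINT_SQFT = 10_000


def meets_idc_hyperscale_threshold(servers: int, footprint_sqft: float) -> bool:
    """Return True when a site meets both size thresholds (hypothetical helper)."""
    return servers >= MIN_SERVERS and footprint_sqft >= MIN_FOOTPRINT_SQFT
```

A site with 4,999 servers fails the check regardless of its floor area, because both conditions must hold.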

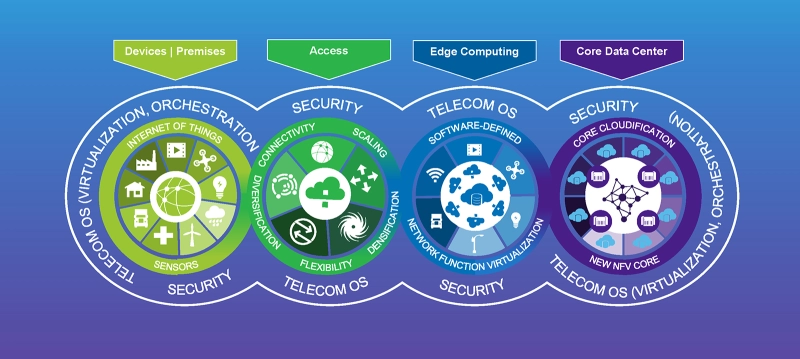

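Horizontal scaling (scaling out) responds to demand quickly by deploying or activating more servers, while vertical scaling (scaling up) increases the power, speed, and bandwidth of existing hardware. The high capacity that hyperscale technology provides is well suited to cloud computing and big data applications. Software-defined networking (SDN) is another essential ingredient, along with specialized load balancing that directs traffic between servers and clients.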

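Hyperscale Resources

- How does hyperscale work?

Hyperscale solutions optimize hardware efficiency through massive server arrays and computing processes designed for flexibility and scalability. A load balancer continuously monitors the workload and capacity of each server so that new requests are routed appropriately. Artificial intelligence (AI) can be applied to hyperscale storage, load balancing, and optical fiber transmission processes to optimize performance and to power down idle or underutilized equipment.

- Benefits of hyperscale

The scale associated with hyperscale architecture brings several benefits, including efficient cooling and power distribution, balanced workloads across servers, and built-in redundancy. These attributes also make it easier to store, locate, and back up large amounts of data. For client companies, using the services of a hyperscale provider can be more cost-effective than buying and maintaining their own servers, with less risk of downtime. This can significantly reduce the time demands on in-house IT staff.

- Challenges of hyperscale

The economies of scale that hyperscale technology provides can also create challenges. High traffic volumes and complex flows make real-time data monitoring difficult. The speed and quantity of fiber and connections also complicate visibility into external traffic. Security concerns are heightened because the concentration of data increases the impact of any breach. Automation and machine learning (ML) are two of the tools being used to improve observability and security for hyperscale providers.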
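The load-balancing behavior described under how hyperscale works (tracking each server's workload and capacity, routing new requests accordingly, and spotting idle equipment) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how any particular load balancer is implemented: the `Server` class, the least-utilization policy, and the capacity units are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Server:
    name: str
    capacity: int      # assumed unit: maximum concurrent requests (must be > 0)
    active: int = 0    # requests currently being served

    @property
    def utilization(self) -> float:
        return self.active / self.capacity


def route_request(servers: list[Server]) -> Server:
    """Send a new request to the least-utilized server that still has headroom."""
    candidates = [s for s in servers if s.active < s.capacity]
    if not candidates:
        raise RuntimeError("all servers are at capacity")
    target = min(candidates, key=lambda s: s.utilization)
    target.active += 1
    return target


def idle_servers(servers: list[Server]) -> list[str]:
    """Names of servers with no active requests (candidates for powering down)."""
    return [s.name for s in servers if s.active == 0]
```

Real deployments weigh many more signals (latency, health checks, locality); the sketch only shows the routing decision itself.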

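Hyperscale Models

The ability to precisely match memory, storage, and compute allocation to changing service requirements is one characteristic that distinguishes hyperscale data centers from traditional data center models. Virtual machine (VM) technology allows software applications to move quickly from one physical location to another. High-density servers, parallel nodes for added redundancy, and continuous monitoring are other common features. Because no single architecture or server configuration is ideal, new models are being developed to meet changing operator, service provider, and customer needs.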

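- What is hyperscale cloud?

The internet and cloud computing have reshaped how users access applications and data storage. By moving these functions from local servers to remote data centers managed by large cloud service providers (CSPs), businesses can scale infrastructure on demand without affecting internal operations. Hyperscale cloud providers add hyperscale data center capacity and elasticity to traditional cloud offerings such as software as a service (SaaS), infrastructure as a service (IaaS), and data analytics.

- Hyperscale vs. cloud computing

Although the two terms are sometimes used interchangeably, cloud deployments do not always reach hyperscale proportions, and hyperscale data centers can support far more than cloud computing. Public clouds (cloud as a service), which deliver resources to multiple customers over the internet, usually fall within the definition of hyperscale, whereas private cloud deployments can be relatively small. When an enterprise data center that an organization builds and operates for its own compute and storage needs incorporates high server capacity and advanced orchestration, the result is known as enterprise hyperscale.

- Hyperscale cloud providers

Hyperscale cloud providers invest heavily in infrastructure and intellectual property (IP) to meet customer demand. Interoperability is becoming more important as customers seek portability across standardized platforms. According to Synergy Research Group, the three largest companies, Amazon Web Services (AWS), Microsoft, and Google, account for more than half of all installations. These three providers also lead the planning and development of hyperscale edge data centers to meet evolving 5G and Internet of Things (IoT) requirements.

- Hyperscale colocation

Colocation allows a business to rent part of an existing data center instead of building a new one. Hyperscale colocation can create useful synergies when related industries, such as retailers and wholesalers, are under one roof. Total power, cooling, and communication costs per tenant fall, while uptime, security, and scalability improve significantly. Hyperscale companies can also improve efficiency and profitability by leasing excess capacity to tenants.

- Hyperscale architecture

Although hyperscale architecture has no fixed definition, common features include petabyte-scale (or greater) storage capacity, a discrete software layer that performs complex load balancing and data transfer functions, built-in redundancy, and commodity server hardware. Unlike the servers used in small private data centers, hyperscale servers typically use wider racks and are designed to be reconfigured more easily. This combination of features effectively moves the intelligence out of the hardware and relies instead on advanced software and automation to manage data storage and scale applications quickly.

- Hyperscale vs. hyperconverged

Unlike hyperscale architecture, which separates compute from storage and networking functions, hyperconverged architecture integrates these components into a pre-packaged, modular solution. Because the elements are software-defined and created in a virtual environment, the three components of a hyperconverged building block cannot be separated. Hyperconverged architecture makes it easier to scale up or down quickly through a single point of contact. Other benefits include bundled compression, encryption, and redundancy features.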

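Hyperscale Connectivity

As the hyperscale model becomes more distributed, edge computing and multi-cloud strategies are increasingly common. Connectivity must be assured both for the physical connections inside the data center and for the high-speed fiber links that seamlessly join hyperscale locations.

Automated MPO connector inspection completed in seconds, simultaneous testing of multiple 400G or 800G ports, and virtual service activation and monitoring are some of the test capabilities needed to keep pace with evolving hyperscale interconnect architectures.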

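Hyperscale Testing Practices

Many hyperscale testing practices for fiber connectivity, network performance, and quality of service mirror traditional data center testing, only at a much larger scale. Uptime reliability becomes more important even as test complexity grows. Data center interconnects (DCIs) running close to full capacity should be tested and monitored consistently to verify throughput and find potential problems before a failure occurs. Automated monitoring solutions should be used to minimize resource demands.

The customization of data center hardware and software makes interoperability essential for advanced test solutions. This includes test tools that support open APIs to accommodate hyperscale diversity. Common interfaces that support high density and high capacity, such as PCIe and MPO, are gaining popularity and require solutions that can test and manage them efficiently.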

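- Bit error rate testers such as the MAP-2100 are developed specifically for environments where few or no personnel are available to perform network testing.

- Network monitoring solutions for the hyperscale ecosystem can flexibly launch large-scale performance monitoring tests from multiple physical or virtual access points.

- Test best practices defined for elements such as MPO and ribbon fiber can be applied at scale across deployments.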

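Hyperscale Solutions

New data center installations continue to grow in size, complexity, and density. Test solutions and products originally developed for other high-capacity fiber applications can also be used to verify and maintain hyperscale performance.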

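- Fiber end-face inspection: The large number of fiber connections within and between data centers calls for reliable, efficient fiber end-face inspection tools. A single particle, defect, or contaminated end face can cause insertion loss and degrade network performance. The best fiber end-face inspection tools for hyperscale applications combine a compact form factor, automated inspection routines, and multi-fiber connector compatibility.

- Fiber monitoring: The growing volume of fiber in the hyperscale data center industry makes fiber monitoring a difficult but important task. Versatile test tools for continuity, optical loss measurement, optical power measurement, and OTDR are essential. This applies to all phases of construction, activation, and troubleshooting. Automated fiber monitoring systems such as the ONMSi Remote Fiber Test System (RFTS) provide scalable, centralized fiber monitoring with immediate alerts.

- MPO: Multi-fiber push-on (MPO) connectors were once used mainly for dense trunk cables. Today, hyperscale density constraints have driven rapid adoption of MPO for patch panel, server, and switch connections. Dedicated MPO test solutions accelerate fiber testing and inspection and produce automated pass/fail results.

- Virtual testing: Portable test instruments that once required a network technician on site can now be operated virtually through the Fusion test agent, reducing resource demands. The software-based Fusion platform can be used to monitor networks and verify SLAs. Ethernet activation tasks, such as RFC 6349 TCP throughput testing, can also be launched and executed virtually.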
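RFC 6349, mentioned above for TCP throughput testing, relies on the bandwidth-delay product (BDP): bottleneck bandwidth multiplied by round-trip time gives the number of bits in flight, and dividing by 8 gives the minimum TCP receive window in bytes needed to fill the path. The sketch below shows only that arithmetic. The function names are hypothetical, and nothing here represents the Fusion platform or any VIAVI API.

```python
import math


def bdp_bits(bottleneck_bps: float, rtt_ms: float) -> float:
    """Bandwidth-delay product in bits: bottleneck bandwidth x round-trip time."""
    return bottleneck_bps * rtt_ms / 1000


def min_window_bytes(bottleneck_bps: float, rtt_ms: float) -> int:
    """Smallest TCP window (bytes) that can keep the path full."""
    return math.ceil(bdp_bits(bottleneck_bps, rtt_ms) / 8)


def window_limited_throughput_bps(window_bytes: int,
                                  bottleneck_bps: float,
                                  rtt_ms: float) -> float:
    """Throughput ceiling for a given window: one window per round trip,
    capped at the bottleneck bandwidth."""
    window_rate = window_bytes * 8 / (rtt_ms / 1000)
    return min(bottleneck_bps, window_rate)
```

As an example with illustrative values, `min_window_bytes(10_000_000_000, 2)` evaluates a 10 Gbit/s link with a 2 ms round trip and yields 2,500,000 bytes. A window much smaller than that limits throughput well below line rate, which is the kind of condition a TCP throughput test is meant to expose.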

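What We Offer

The diverse VIAVI test portfolio covers all aspects and phases of hyperscale construction, activation, and maintenance. Throughout the transition to larger, denser data centers and edge computing, fiber connector inspection has remained an essential part of the overall test strategy.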

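- Certification: The rapid expansion of MPO connector applications makes the FiberChek Sidewinder an ideal solution for automated multi-fiber end-face certification. Optical loss test sets (OLTS) designed specifically for MPO interfaces, such as the SmartClass Fiber MPOLx, also make Layer 1 fiber certification easier and more reliable.

- High-speed testing: Optical transport network (OTN) testing and Ethernet service activation must be performed quickly and accurately to support hyperscale high-speed connectivity:

  - The T-BERD 5800 100G (or MTS-5800 100G outside the North American market) is an industry-leading, ruggedized, compact dual-port 100G test instrument for fiber testing, service activation, and troubleshooting. This versatile tool can be used for metro/core applications as well as DCI testing.

  - The MTS-5800 supports operational consistency through repeatable methods and procedures.

  - The versatile, cloud-enabled OneAdvisor-1000 takes high-speed testing to the next level with full rate and protocol coverage, native PAM4 connectivity, and service activation testing for 400G and legacy technologies.

- Multi-fiber all-in-one: Hyperscale architecture creates an ideal environment for automated OTDR testing through MPO connections. The multi-fiber MPO switch module is an all-in-one solution for MPO-dominated, high-density fiber environments. When it is used with the MTS test platform, fibers can be characterized by OTDR without time-consuming fan-out/breakout cables. Automated certification test workflows can be run on up to 12 fibers at a time.

- Automated testing: Test process automation (TPA) reduces hyperscale build times, manual test procedures, and training time. Automation enables efficient throughput and BER testing between hyperscale data centers, as well as end-to-end verification of complex 5G network slices.

  - The SmartClass Fiber MPOLx optical loss test set brings TPA to Layer 1 fiber certification with native MPO connectivity, automated workflows, and full visibility at both ends of the link. Complete results for all 12 fibers are available in under 6 seconds.

  - The handheld Optimeter fiber meter completes fully automated, one-touch fiber link certification in under a minute, making the "no test" option irrelevant.

- Standalone remote fiber monitoring: Advanced remote test solutions are ideal for scalable, unmanned hyperscale cloud setups. With fiber monitoring, detected events, including degradation, fiber tapping, or intrusion, are quickly converted into alerts that protect SLA contracts and DCI uptime. The ONMSi Remote Fiber Test System (RFTS) performs continuous OTDR "sweeps" to accurately detect and predict fiber degradation across the network. Operating expenses, MTTR, and network downtime for hyperscale data centers are greatly reduced.

- Observability and validation: The same breakthroughs in machine learning (ML), artificial intelligence (AI), and network functions virtualization (NFV) that enable hyperscale cloud and edge computing for 5G are also driving advanced hyperscale test solutions.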

Application Guides

Brochures

Let Us Help

We are here to help you succeed.